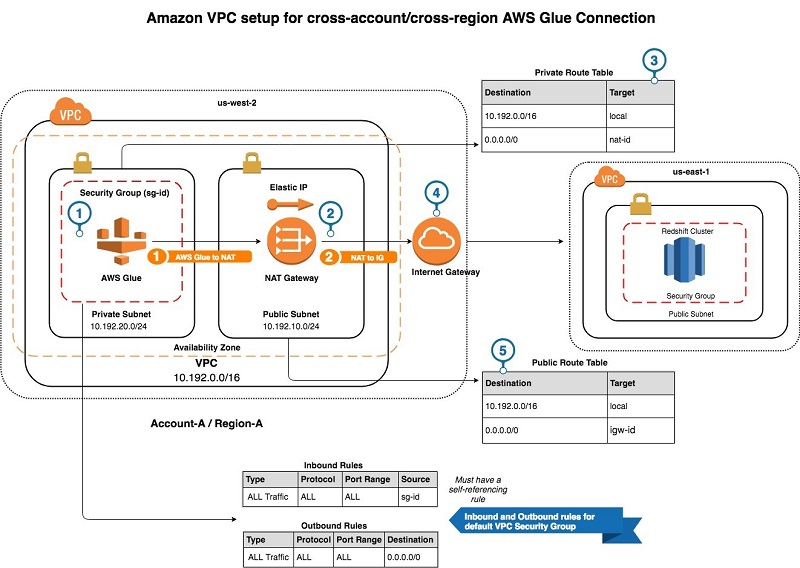

Post syndicated from Channy Yun (original)

Apache Spark is an open-source, distributed processing system commonly used for big data workloads. Spark application developers working in Amazon EMR, Amazon SageMaker, and AWS Glue often use third-party Apache Spark connectors that allow them to read and write data with Amazon Redshift. These third-party connectors are not regularly maintained, supported, or tested with various versions of Spark for production.

Today we are announcing the general availability of Amazon Redshift integration for Apache Spark, which makes it easy to build and run Spark applications on Amazon Redshift and Redshift Serverless, enabling customers to open up the data warehouse for a broader set of AWS analytics and machine learning (ML) solutions.

With Amazon Redshift integration for Apache Spark, you can get started in seconds and effortlessly build Apache Spark applications in a variety of languages, such as Java, Scala, and Python.

Your applications can read from and write to your Amazon Redshift data warehouse without compromising application performance or the transactional consistency of the data, and they benefit from performance improvements with pushdown optimizations.

Amazon Redshift integration for Apache Spark builds on an existing open source connector project and enhances it for performance and security, helping customers gain up to 10x faster application performance. We thank the original contributors on the project who collaborated with us to make this happen. As we make further enhancements we will continue to contribute back into the open source project.

Getting Started with Spark Connector for Amazon Redshift

To get started, you can go to AWS analytics and ML services, use DataFrame or Spark SQL code in a Spark job or notebook to connect to the Amazon Redshift data warehouse, and start running queries in seconds.

In this launch, Amazon EMR 6.9, EMR Serverless, and AWS Glue 4.0 come with the pre-packaged connector and JDBC driver, and you can just start writing code. EMR 6.9 provides a sample notebook, and EMR Serverless provides a sample Spark job too.

First, you should set AWS Identity and Access Management (AWS IAM) authentication between Redshift and Spark, between Amazon Simple Storage Service (Amazon S3) and Spark, and between Redshift and Amazon S3. The following diagram describes the authentication between Amazon S3, Redshift, the Spark driver, and Spark executors.

For more information, see Identity and access management in Amazon Redshift in the AWS documentation.

Amazon EMR

If you already have an Amazon Redshift data warehouse and the data available, you can create the database user and provide the right level of grants to the database user. To use this with Amazon EMR, you need to upgrade to the latest version of Amazon EMR 6.9, which has the packaged spark-redshift connector. Select the emr-6.9.0 release when you create an EMR cluster on Amazon EC2.

You can use EMR Serverless to create your Spark application using the emr-6.9.0 release to run your workload.

EMR Studio also provides an example Jupyter notebook configured to connect to an Amazon Redshift Serverless endpoint, leveraging sample data that you can use to get started quickly.

Here is a Scala example to build your applications with both Spark DataFrame and Spark SQL. Use IAM-based credentials for connecting to Redshift, and use an IAM role for unloading and loading data from S3.

Create the JDBC connection URL and define the Redshift context: `val jdbcURL = "jdbc:redshift:iam://:/?DbUser="`

Reference the sales table from Redshift: `sales_df.createOrReplaceTempView("sales")`

Reference the date table from Redshift using a DataFrame: `sales_df.join(date_df, sales_df("dateid") === date_df("dateid"))`

If Amazon Redshift and Amazon EMR are in different VPCs, you have to configure VPC peering or enable cross-VPC access. Assuming both Amazon Redshift and Amazon EMR are in the same virtual private cloud (VPC), you can create a Spark job or notebook, connect to the Amazon Redshift data warehouse, and write Spark code to use the Amazon Redshift connector.

To learn more, see Use Spark on Amazon Redshift with a connector in the AWS documentation.

AWS Glue

When you use AWS Glue 4.0, the spark-redshift connector is available both as a source and target. In Glue Studio, you can use a visual ETL job to read from or write to a Redshift data warehouse simply by selecting a Redshift connection to use within a built-in Redshift source or target node.
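The Scala fragments quoted in this post (the JDBC URL, the `sales` temp view, and the join on `dateid`) can be assembled into a fuller sketch. This is a minimal illustration and not the post's exact listing. It assumes the community connector's format name `io.github.spark_redshift_community.spark.redshift` and its `url`, `tempdir`, `aws_iam_role`, and `dbtable` options. The endpoint, port, database, user, S3 path, role ARN, the target table `sales_by_date`, and the helper names `jdbcUrl`, `redshiftOptions`, and `readTable` are all hypothetical placeholders.

```scala
import org.apache.spark.sql.{DataFrame, SaveMode, SparkSession}

object RedshiftSketch {
  // Format name of the community connector (assumed to be the one on the classpath).
  val ConnectorFormat = "io.github.spark_redshift_community.spark.redshift"

  // Build an IAM-based JDBC URL. Every argument is a value you supply.
  def jdbcUrl(host: String, port: Int, database: String, dbUser: String): String =
    s"jdbc:redshift:iam://$host:$port/$database?DbUser=$dbUser"

  // Options shared by reads and writes: JDBC URL, S3 staging directory,
  // and the IAM role Redshift uses to unload to and load from S3.
  def redshiftOptions(url: String, tempDir: String, iamRoleArn: String): Map[String, String] =
    Map("url" -> url, "tempdir" -> tempDir, "aws_iam_role" -> iamRoleArn)

  // Load one Redshift table as a DataFrame.
  def readTable(spark: SparkSession, opts: Map[String, String], table: String): DataFrame =
    spark.read.format(ConnectorFormat).options(opts).option("dbtable", table).load()

  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().appName("redshift-sketch").getOrCreate()

    // Hypothetical placeholder values; replace with your own.
    val opts = redshiftOptions(
      jdbcUrl("example-endpoint", 5439, "dev", "example_user"),
      "s3://example-bucket/redshift-temp/",
      "arn:aws:iam::123456789012:role/example-redshift-role")

    // Reference the sales and date tables from Redshift.
    val salesDf = readTable(spark, opts, "sales")
    val dateDf = readTable(spark, opts, "date")
    salesDf.createOrReplaceTempView("sales")
    dateDf.createOrReplaceTempView("date_dim")

    // DataFrame API: join on dateid (=== is Spark's column equality operator).
    val joined = salesDf.join(dateDf, salesDf("dateid") === dateDf("dateid"))
    joined.show(5)

    // Spark SQL: the same join expressed against the temp views.
    val perDate = spark.sql(
      """SELECT d.dateid, COUNT(*) AS sales_count
        |FROM sales s JOIN date_dim d ON s.dateid = d.dateid
        |GROUP BY d.dateid""".stripMargin)

    // Write the result back to Redshift; fails if the target table already exists.
    perDate.write
      .format(ConnectorFormat)
      .options(opts)
      .option("dbtable", "sales_by_date")
      .mode(SaveMode.ErrorIfExists)
      .save()

    spark.stop()
  }
}
```

Keeping the URL and option construction in small pure functions means the connection settings can be checked without a cluster, while `main` only runs where the connector and JDBC driver are on the classpath, for example an emr-6.9.0 cluster.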
